Hyperbolic and Non-Euclidean Geometry for LLMs

Events and News!

- ICML 2026 · HypRAG: Hyperbolic Dense Retrieval for Retrieval Augmented Generation (PDF)

- NeurIPS 2025 HELM: Hyperbolic Large Language Models via Mixture-of-Curvature Experts - GitHub

- NeurIPS 2025 Hyperbolic Fine-tuning for Large Language Models (HypLoRA)

- KDD 2026 Hyperbolic Learning Tutorial 🔥

- KDD 2026 Geometric Learning Workshop 🔥

- NeurIPS 2025 NEGEL Workshop

- AAAI 2026 Hyperbolic FM Tutorial

- KDD 2025 Hyperbolic FM Tutorial

- WWW 2025 NEGEL Workshop

- KDD 2023 Tutorial

- Slack channel for more discussions and tracking updates!

- Awesome Hyperbolic Representation and Deep Learning Repository

Last updated: May 28, 2026

Introduction

Foundation models, including large language models (LLMs), vision-language models (VLMs), and large multimodal models, are pre-trained on massive datasets and have demonstrated remarkable success across diverse downstream tasks. Models such as GPT-4, LLaMA, CLIP, and Gemini have pushed the boundaries of natural language understanding, visual reasoning, and cross-modal generation. Despite these achievements, recent studies have identified three fundamental limitations rooted in their reliance on Euclidean geometry:

- Limited representational capacity for hierarchical and structured data, where shallow Euclidean embeddings struggle to preserve tree-like relations.

- Lower adaptability when fine-tuning on tasks with inherent geometric structure, leading to suboptimal transfer on knowledge- and reasoning-intensive benchmarks.

- Less efficient scaling, since the polynomial volume growth of Euclidean space cannot match the exponential branching of real-world hierarchies, forcing the use of high-dimensional embeddings.

These shortcomings raise a critical question:

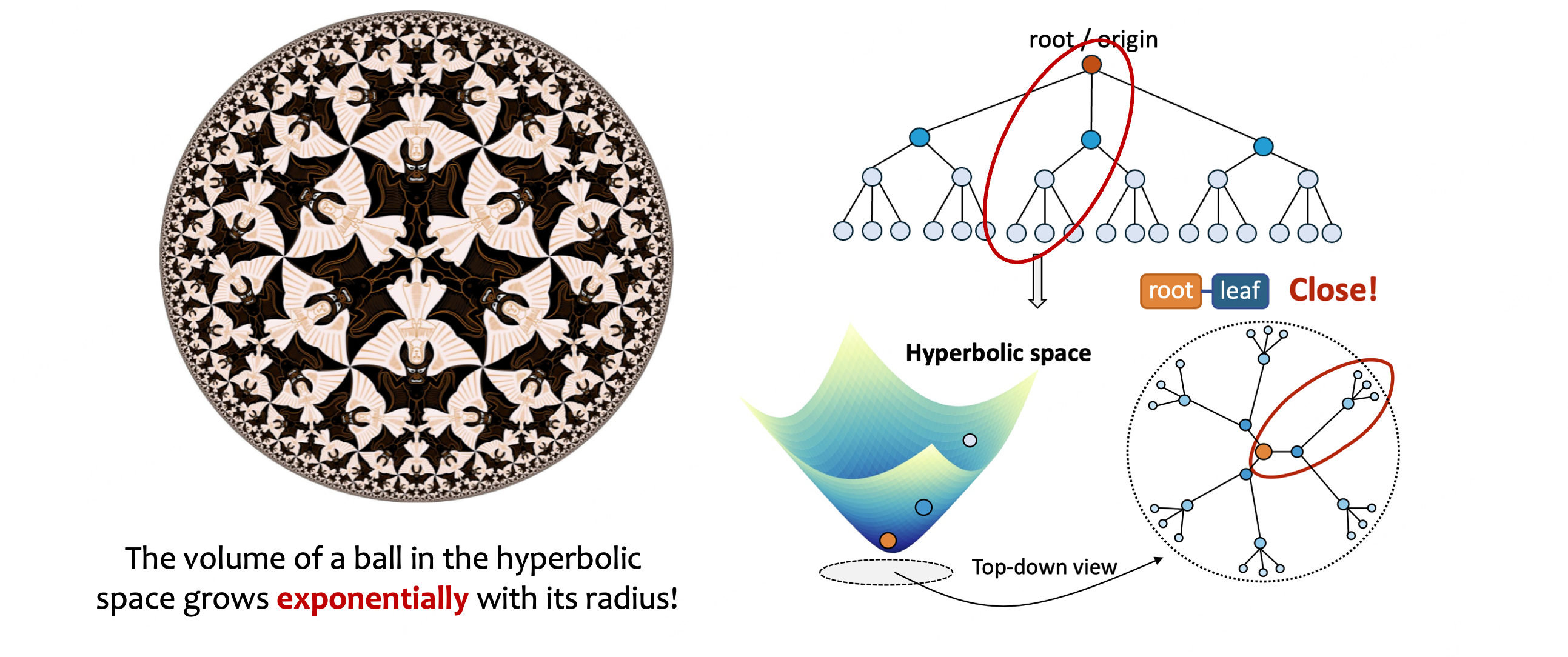

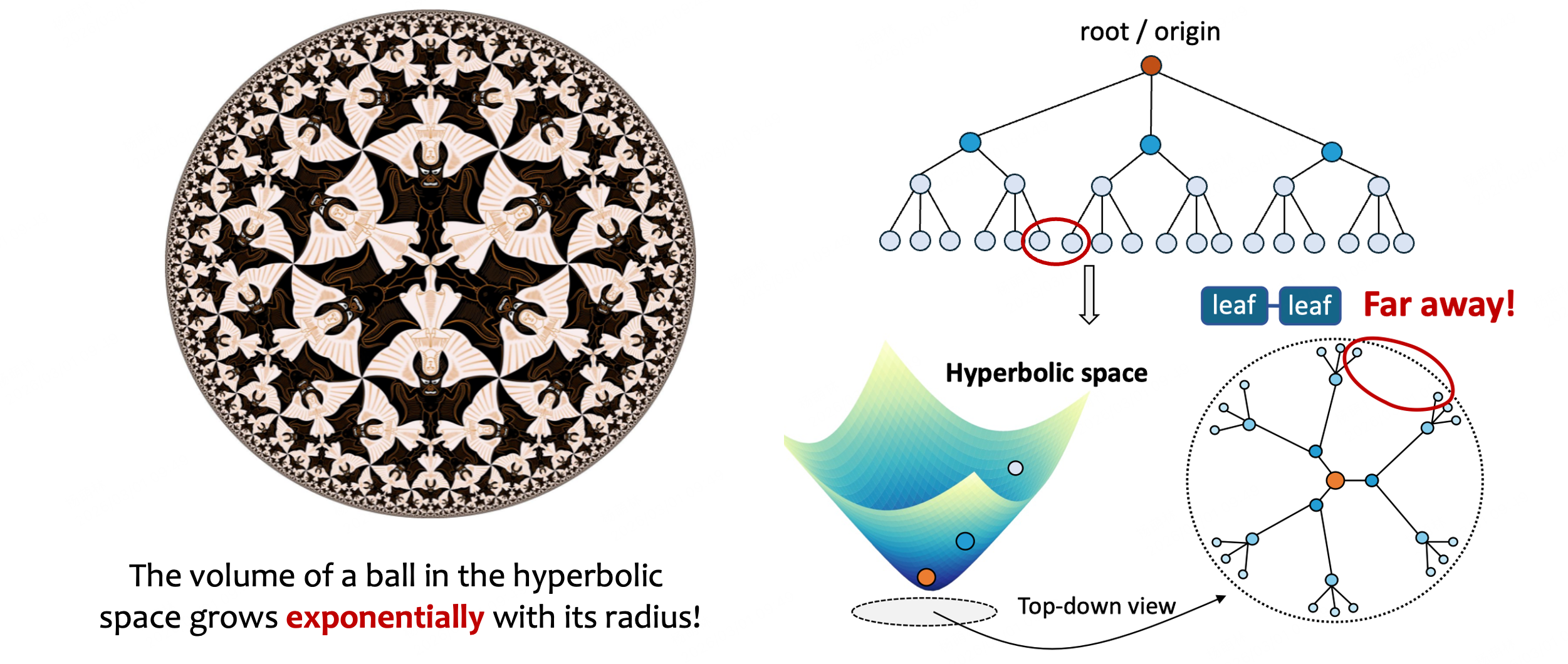

Consider a simple example. Natural language inherently contains hierarchical structures, where words compose phrases, phrases compose sentences, and sentences compose paragraphs. Knowledge graphs likewise encode taxonomic relationships (e.g., “animal → mammal → dog → poodle”) in which the number of entities grows exponentially with depth. Euclidean space cannot embed a balanced binary tree with arbitrarily low distortion, no matter how many dimensions are used: the best achievable distortion grows with the depth of the tree. In hyperbolic space, by contrast, any tree can be embedded in just 2 dimensions with distortion arbitrarily close to 1 [Sarkar, 2011]. This gap motivates the exploration of hyperbolic geometry as a foundational building block for modern AI systems.

This webpage provides a comprehensive overview of hyperbolic geometry and non-Euclidean representations for large language models and foundation models. We cover the mathematical foundations, key computational models, neural network architectures, and state-of-the-art methods that bring hyperbolic geometry to modern AI.

1. Hyperbolic Geometry

Hyperbolic geometry represents one of the most profound developments in mathematical history, emerging from the centuries-long quest to understand Euclid’s fifth postulate (the parallel postulate). For over two thousand years, mathematicians attempted to derive the parallel postulate from the other four axioms. It was not until the 19th century that Lobachevsky, Bolyai, and Gauss independently discovered that rejecting the parallel postulate leads to a consistent, non-Euclidean geometry, namely hyperbolic geometry.

Unlike Euclidean geometry, where exactly one parallel line passes through a given external point, hyperbolic geometry permits infinitely many parallel lines through a point external to a given line. This fundamental difference creates a geometric space with constant negative curvature $\kappa < 0$, contrasting with the flat (zero curvature) Euclidean space and the positive curvature of spherical geometry.

1.1 Key Properties of Hyperbolic Space

The distinctive properties of hyperbolic spaces set them apart from Euclidean geometry in ways that are profoundly useful for machine learning:

- Exponential volume growth: For curvature $\kappa = -1$, the area of a disk of radius $r$ in hyperbolic space grows as $\mathcal{O}(e^{r})$, compared to $\mathcal{O}(r^2)$ in Euclidean space. Hyperbolic space can therefore “fit” exponentially more content at increasing distances from the origin, matching the exponential growth in the number of nodes of trees and hierarchies.

- Triangle angle deficit: The sum of angles in a hyperbolic triangle is always strictly less than $\pi$ (180°), with the deficit proportional to the triangle’s area. Specifically, for a triangle with angles $\alpha, \beta, \gamma$ and curvature $\kappa$, the area equals $(\pi - \alpha - \beta - \gamma) / \lvert\kappa\rvert$.

- Distance distortion: Small Euclidean displacements near the boundary of the Poincaré disk correspond to large hyperbolic distances. For $\kappa = -1$ the metric tensor is $g_{ij} = \frac{4}{(1 - \lVert \mathbf{x}\rVert^2)^2}\delta_{ij}$, so the distance from the origin, $d(\mathbf{0}, \mathbf{x}) = 2\,\operatorname{artanh}(\lVert \mathbf{x}\rVert)$, diverges as $\lVert \mathbf{x}\rVert \to 1$. A point that appears close to the boundary in Euclidean coordinates is therefore very far from the center, and the boundary itself lies at infinite distance.

- Natural hierarchy representation: A regular tree with branching factor $b$ and depth $d$ has $\mathcal{O}(b^d)$ nodes. The exponential volume growth of hyperbolic space naturally accommodates such structures, enabling low-distortion embeddings with remarkably few dimensions.
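The first three properties can be checked numerically. Below is a minimal sketch, assuming curvature $\kappa = -1$ throughout. It uses the standard closed forms $4\pi\sinh^2(r/2)$ for the area of a hyperbolic disk, Gauss-Bonnet for the triangle, and $2\,\operatorname{artanh}(\lVert\mathbf{x}\rVert)$ for the Poincaré distance from the origin. The function names are illustrative, not from any library.

```python
import math

# All formulas below assume curvature kappa = -1 unless stated otherwise.

def hyperbolic_disk_area(r: float) -> float:
    """Area of a geodesic disk of radius r in the hyperbolic plane: 4*pi*sinh^2(r/2)."""
    return 4.0 * math.pi * math.sinh(r / 2.0) ** 2


def euclidean_disk_area(r: float) -> float:
    """Area of a Euclidean disk of radius r: pi*r^2."""
    return math.pi * r ** 2


def triangle_area(alpha: float, beta: float, gamma: float, kappa: float = -1.0) -> float:
    """Gauss-Bonnet: area of a hyperbolic triangle equals its angle deficit divided by |kappa|."""
    return (math.pi - alpha - beta - gamma) / abs(kappa)


def poincare_dist_from_origin(norm: float) -> float:
    """Hyperbolic distance from the origin of the Poincare disk to a point of Euclidean norm < 1."""
    return 2.0 * math.atanh(norm)


for r in (1.0, 5.0, 10.0):
    ratio = hyperbolic_disk_area(r) / euclidean_disk_area(r)
    print(f"r = {r:4.1f}: hyperbolic / Euclidean area = {ratio:.1f}")

for norm in (0.5, 0.9, 0.99, 0.999):
    print(f"||x|| = {norm}: d(0, x) = {poincare_dist_from_origin(norm):.2f}")
```

The area ratio grows roughly like $e^r / r^2$. The distance from the origin keeps increasing as the Euclidean norm approaches 1, which is the "infinitely far boundary" behavior described above.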

1.2 Why Hyperbolic Geometry for AI?

Real-world data frequently exhibits hierarchical or scale-free structure. Natural language has syntactic parse trees, knowledge graphs form taxonomies, protein structures involve nested sub-domains, social networks display community hierarchies, and image features organize from low-level textures to high-level semantics. Many real-world graphs have small Gromov $\delta$-hyperbolicity (small $\delta$ values indicate tree-like structure). This suggests that these datasets are intrinsically closer to hyperbolic than to Euclidean geometry [Gromov, 1987; Adcock et al., 2013] and provides a principled motivation for leveraging hyperbolic representations in deep learning.
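To make $\delta$ concrete, here is a brute-force sketch of the four-point definition on a small unweighted graph. Other common definitions, such as thin triangles, agree only up to a constant factor. For every quadruple of vertices we form the three pairwise distance sums and take half the gap between the largest and the second largest. The helper names are hypothetical, and the $\mathcal{O}(n^4)$ loop is only meant for toy graphs.

```python
from collections import deque
from itertools import combinations


def all_pairs_hops(adj):
    """BFS shortest-path (hop) distances for an unweighted, connected graph given as an adjacency dict."""
    dist = {}
    for source in adj:
        d = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in d:
                    d[v] = d[u] + 1
                    queue.append(v)
        dist[source] = d
    return dist


def gromov_delta(adj):
    """Four-point Gromov delta: max over quadruples of (largest - second largest pair sum) / 2."""
    d = all_pairs_hops(adj)
    delta = 0.0
    for x, y, z, w in combinations(adj, 4):
        sums = sorted([d[x][y] + d[z][w], d[x][z] + d[y][w], d[x][w] + d[y][z]])
        delta = max(delta, (sums[2] - sums[1]) / 2.0)
    return delta


# A binary tree of depth 2 versus a 4-cycle.
tree = {0: [1, 2], 1: [0, 3, 4], 2: [0, 5, 6], 3: [1], 4: [1], 5: [2], 6: [2]}
cycle = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
print(gromov_delta(tree), gromov_delta(cycle))  # 0.0 1.0
```

Trees are exactly 0-hyperbolic under this definition, while cycles are not. This is the sense in which a small $\delta$ signals tree-like structure.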

2. Hyperbolic Models

To visualize and work with hyperbolic geometry computationally, mathematicians have developed several isometric models. Each model represents the same underlying hyperbolic space but offers different computational trade-offs. Understanding these models is essential for designing hyperbolic neural networks.

2.1 Poincaré Ball Model

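The Poincaré ball model $(\mathbb{B}^n_\kappa, g^{\mathbb{B}})$ with curvature $\kappa < 0$ represents $n$-dimensional hyperbolic space as the interior of the ball $\mathbb{B}^n_\kappa = \{\mathbf{x} \in \mathbb{R}^n : \lVert \mathbf{x}\rVert^2 < -1/\kappa\}$, which is the unit ball when $\kappa = -1$. The Riemannian metric is given by:

$$g^{\mathbb{B}}_{\mathbf{x}} = (\lambda^\kappa_{\mathbf{x}})^2 \, g^E, \qquad \lambda^\kappa_{\mathbf{x}} = \frac{2}{1 + \kappa \lVert \mathbf{x}\rVert^2},$$
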
where $g^E$ is the Euclidean metric. This model is conformal (preserves angles but distorts distances) and is the most widely used in machine learning thanks to its intuitive visualization properties: the origin represents the “root” of a hierarchy, while branches spread toward the boundary. Key operations include the Möbius addition $\mathbf{x} \oplus_\kappa \mathbf{y}$ and the exponential/logarithmic maps that transport vectors between the tangent space and the manifold [Ungar, 2008; Ganea et al., 2018].
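The core Poincaré-ball operations named above can be sketched in a few lines of NumPy, following the formulas of Ganea et al. (2018). For convenience we write $c = -\kappa > 0$, so the ball has radius $1/\sqrt{c}$; that convention and the helper names (`mobius_add`, `expmap0`, `logmap0`, `poincare_dist`) are our own assumptions, not a specific library's API. Only the maps at the origin are shown.

```python
import numpy as np

# Convention assumed in this sketch: c = -kappa > 0, ball radius 1/sqrt(c).

def mobius_add(x: np.ndarray, y: np.ndarray, c: float) -> np.ndarray:
    """Mobius addition x (+)_c y in the Poincare ball."""
    xy = np.dot(x, y)
    x2 = np.dot(x, x)
    y2 = np.dot(y, y)
    num = (1 + 2 * c * xy + c * y2) * x + (1 - c * x2) * y
    den = 1 + 2 * c * xy + c ** 2 * x2 * y2
    return num / den


def expmap0(v: np.ndarray, c: float) -> np.ndarray:
    """Exponential map at the origin: tangent vector -> point inside the ball."""
    norm = np.linalg.norm(v)
    if norm == 0:
        return np.zeros_like(v)
    sc = np.sqrt(c)
    return np.tanh(sc * norm) * v / (sc * norm)


def logmap0(y: np.ndarray, c: float) -> np.ndarray:
    """Logarithmic map at the origin: point inside the ball -> tangent vector."""
    norm = np.linalg.norm(y)
    if norm == 0:
        return np.zeros_like(y)
    sc = np.sqrt(c)
    return np.arctanh(sc * norm) * y / (sc * norm)


def poincare_dist(x: np.ndarray, y: np.ndarray, c: float) -> float:
    """Geodesic distance d_c(x, y) = (2 / sqrt(c)) * artanh(sqrt(c) * ||(-x) (+)_c y||)."""
    sc = np.sqrt(c)
    return 2 / sc * np.arctanh(sc * np.linalg.norm(mobius_add(-x, y, c)))


c = 1.0
v = np.array([0.3, -0.4])
x = expmap0(v, c)                      # a point inside the unit ball
print(np.allclose(logmap0(x, c), v))   # True: log0 inverts exp0
```

With $c = 1$, `poincare_dist` agrees with the familiar closed form $\operatorname{arcosh}\big(1 + 2\lVert\mathbf{x}-\mathbf{y}\rVert^2 / ((1-\lVert\mathbf{x}\rVert^2)(1-\lVert\mathbf{y}\rVert^2))\big)$.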

2.2 Lorentz (Hyperboloid) Model

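The Lorentz model $(\mathbb{H}^n_\kappa, g^{\mathbb{L}})$ embeds hyperbolic space as the upper sheet of a hyperboloid in $(n+1)$-dimensional Minkowski space:

$$\mathbb{H}^n_\kappa = \{\mathbf{x} \in \mathbb{R}^{n+1} : \langle \mathbf{x}, \mathbf{x} \rangle_{\mathcal{L}} = 1/\kappa,\ x_0 > 0\},$$

where $\langle \mathbf{x}, \mathbf{y} \rangle_{\mathcal{L}} = -x_0 y_0 + \sum_{i=1}^{n} x_i y_i$ is the Minkowski inner product. The Lorentz model is generally more numerically stable than the Poincaré model (it avoids the boundary singularity) and is often preferred for optimization. The geodesic distance is:

$$d_{\mathbb{L}}(\mathbf{x}, \mathbf{y}) = \frac{1}{\sqrt{-\kappa}} \operatorname{arcosh}\left(\kappa \langle \mathbf{x}, \mathbf{y} \rangle_{\mathcal{L}}\right).$$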
2.3 Klein Model

The Klein model represents hyperbolic space within a disk where geodesics appear as straight chords rather than arcs. While it distorts both angles and distances, it offers computational simplicity for certain geometric operations such as computing convex hulls and determining whether points lie on the same geodesic. The metric is non-conformal, making it less popular in neural network applications but valuable for geometric algorithms.

2.4 Poincaré Half-Plane Model

The upper half-plane model $\mathbb{U}^n = \{\mathbf{x} \in \mathbb{R}^n : x_n > 0\}$ with metric $g^{\mathbb{U}}_{\mathbf{x}} = \frac{1}{x_n^2} g^E$ offers an unbounded representation of hyperbolic space. It is particularly useful for theoretical analysis and has deep connections to complex analysis and modular forms. Like the Poincaré ball, it is conformal.

2.5 Inter-Model Mappings

All four models are isometric to one another, and closed-form isometries convert coordinates between them. In practice, researchers often perform forward computation in the Lorentz model (for numerical stability) and visualization in the Poincaré ball (for interpretability), converting between models as needed.
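For curvature $\kappa = -1$, the conversions between the Lorentz, Poincaré, and Klein models have simple closed forms. The sketch below implements them with hypothetical helper names. It also checks the key property that distance is preserved: the Poincaré and Lorentz distance formulas agree on converted points.

```python
import numpy as np

# Curvature kappa = -1 throughout this sketch.

def lorentz_to_poincare(x: np.ndarray) -> np.ndarray:
    """Stereographic projection from the hyperboloid to the unit ball."""
    return x[1:] / (1.0 + x[0])


def poincare_to_lorentz(p: np.ndarray) -> np.ndarray:
    """Inverse map: unit ball -> upper sheet of the hyperboloid."""
    s = np.dot(p, p)
    return np.concatenate(([1.0 + s], 2.0 * p)) / (1.0 - s)


def poincare_to_klein(p: np.ndarray) -> np.ndarray:
    return 2.0 * p / (1.0 + np.dot(p, p))


def klein_to_poincare(k: np.ndarray) -> np.ndarray:
    return k / (1.0 + np.sqrt(1.0 - np.dot(k, k)))


def poincare_dist(p: np.ndarray, q: np.ndarray) -> float:
    num = 2.0 * np.dot(p - q, p - q)
    den = (1.0 - np.dot(p, p)) * (1.0 - np.dot(q, q))
    return np.arccosh(1.0 + num / den)


def lorentz_dist(x: np.ndarray, y: np.ndarray) -> float:
    inner = -x[0] * y[0] + np.dot(x[1:], y[1:])
    return np.arccosh(max(-inner, 1.0))


p = np.array([0.2, -0.5])
q = np.array([-0.6, 0.1])
x, y = poincare_to_lorentz(p), poincare_to_lorentz(q)
print(poincare_dist(p, q), lorentz_dist(x, y))   # the two values agree
```

A typical pipeline computes in Lorentz coordinates and calls `lorentz_to_poincare` only when plotting.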

3. Hyperbolic Neural Networks

Hyperbolic neural networks extend deep learning architectures to operate directly in hyperbolic space, enabling more efficient representation of hierarchical data. The core insight is that hierarchical relationships demand exponentially increasing capacity at greater depths, which is exactly the regime in which hyperbolic spaces excel due to their exponential volume growth.

3.1 Hyperbolic Embeddings

Hyperbolic embeddings represent discrete objects (such as nodes in a graph or words in a vocabulary) as points in hyperbolic space. The seminal work of Nickel and Kiela (2017) demonstrated that Poincaré embeddings of the WordNet noun hierarchy could achieve superior performance with just 5 dimensions compared to 200-dimensional Euclidean embeddings. Subsequent work introduced embeddings in the Lorentz model for improved optimization stability [Nickel and Kiela, 2018].

Key advantages of hyperbolic embeddings include:

- Dimensionality reduction: Orders-of-magnitude fewer parameters for hierarchical data

- Implicit hierarchy preservation: Distance from the origin encodes hierarchical depth

- Continuous relaxation of trees: Hyperbolic space serves as a continuous analogue of discrete tree structures
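Below is a sketch of the training signal behind Poincaré embeddings. It assumes the softmax-over-negatives objective described by Nickel and Kiela (2017): for an observed edge $(u, v)$, the positive $v$ should be closer to $u$ in hyperbolic distance than a set of sampled non-neighbors. Negative sampling and the Riemannian update are omitted. The function names are hypothetical.

```python
import numpy as np

def poincare_dist(u: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Poincare distance (curvature -1); broadcasts over leading axes."""
    sq = np.sum((u - v) ** 2, axis=-1)
    denom = (1 - np.sum(u ** 2, axis=-1)) * (1 - np.sum(v ** 2, axis=-1))
    return np.arccosh(1 + 2 * sq / denom)


def edge_loss(u: np.ndarray, v_pos: np.ndarray, v_negs: np.ndarray) -> float:
    """-log softmax of -d(u, v_pos) against -d(u, v') for the positive and each negative v'."""
    d_pos = poincare_dist(u, v_pos)
    d_neg = poincare_dist(u[None, :], v_negs)        # shape (num_negatives,)
    logits = -np.concatenate(([d_pos], d_neg))
    m = logits.max()                                  # for a numerically stable log-sum-exp
    return float(-(logits[0] - (m + np.log(np.sum(np.exp(logits - m))))))


u = np.array([0.1, 0.0])
near = np.array([0.15, 0.05])
far = np.array([[-0.6, 0.3], [0.2, -0.7]])
print(edge_loss(u, near, far))   # small: the positive is the closest point
```

Minimizing this loss with a Riemannian optimizer pulls linked nodes together and pushes unrelated ones apart. In the resulting embeddings, general concepts tend to sit near the origin.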

3.2 Hyperbolic Neural Layers

Since hyperbolic space is a Riemannian manifold (not a vector space), standard operations like matrix multiplication and addition must be carefully redefined. Three main paradigms have emerged:

- Tangent space approach: Map points to the tangent space at a reference point (typically the origin) with the logarithmic map, apply standard Euclidean operations, and project back using the exponential map. This is computationally efficient but introduces approximation errors, especially for points far from the reference.

- Möbius gyrovector approach: Möbius addition $\oplus_\kappa$ and Möbius scalar multiplication, together with its extension to Möbius matrix-vector multiplication $\otimes_\kappa$, provide intrinsic operations in the Poincaré ball that generalize their Euclidean counterparts. A hyperbolic linear layer can be defined as $f(\mathbf{x}) = (\mathbf{M} \otimes_\kappa \mathbf{x}) \oplus_\kappa \mathbf{b}$, where $\mathbf{M}$ is a learnable weight matrix and $\mathbf{b}$ is a bias in hyperbolic space.

- Lorentz operations: Directly define neural network operations on the hyperboloid using the Lorentz inner product and parallel transport. This approach often yields better numerical stability and has become increasingly popular in recent architectures.
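Here is a minimal sketch of the Möbius linear layer $f(\mathbf{x}) = (\mathbf{M} \otimes_\kappa \mathbf{x}) \oplus_\kappa \mathbf{b}$. It uses the Möbius matrix-vector formula of Ganea et al. (2018) with $c = -\kappa > 0$. Representing the bias as `expmap0` of a Euclidean vector is a common choice but an assumption here, and all helper names are hypothetical. The formula coincides with the tangent-space recipe $\exp_0(\mathbf{M}\log_0(\mathbf{x}))$, which links the first two paradigms above.

```python
import numpy as np

def mobius_add(x, y, c):
    """Mobius addition x (+)_c y."""
    xy, x2, y2 = np.dot(x, y), np.dot(x, x), np.dot(y, y)
    num = (1 + 2 * c * xy + c * y2) * x + (1 - c * x2) * y
    return num / (1 + 2 * c * xy + c ** 2 * x2 * y2)


def expmap0(v, c):
    n = np.linalg.norm(v)
    return v if n == 0 else np.tanh(np.sqrt(c) * n) * v / (np.sqrt(c) * n)


def logmap0(y, c):
    n = np.linalg.norm(y)
    return y if n == 0 else np.arctanh(np.sqrt(c) * n) * y / (np.sqrt(c) * n)


def mobius_matvec(M, x, c):
    """Mobius matrix-vector product M (x)_c x."""
    x_norm = np.linalg.norm(x)
    mx = M @ x
    mx_norm = np.linalg.norm(mx)
    if x_norm == 0 or mx_norm == 0:
        return np.zeros_like(mx)
    sc = np.sqrt(c)
    return np.tanh(mx_norm / x_norm * np.arctanh(sc * x_norm)) * mx / (mx_norm * sc)


def hyperbolic_linear(M, b, x, c):
    """f(x) = (M (x)_c x) (+)_c b, with b a point inside the ball."""
    return mobius_add(mobius_matvec(M, x, c), b, c)


rng = np.random.default_rng(0)
c = 1.0
M = rng.normal(size=(3, 2))                      # maps a 2-d ball to a 3-d ball
b = expmap0(np.array([0.1, -0.2, 0.05]), c)      # bias placed on the manifold
x = expmap0(np.array([0.4, 0.3]), c)
y = hyperbolic_linear(M, b, x, c)
print(np.linalg.norm(y) < 1.0)                   # True: the output stays inside the ball
```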

3.3 Key Architectures

Several foundational hyperbolic architectures have been developed:

- HGCN (Hyperbolic Graph Convolutional Networks) [Chami et al., 2019]: Extends GCNs to hyperbolic space, achieving state-of-the-art on hierarchical graph benchmarks

- HNN / HNN++ (Hyperbolic Neural Networks) [Ganea et al., 2018; Shimizu et al., 2021]: General-purpose hyperbolic feedforward and recurrent networks

- HGNN (Hyperbolic Graph Neural Networks) [Liu et al., 2019]: Message-passing framework adapted for hyperbolic geometry

- Fully Hyperbolic Neural Networks [Chen et al., 2022]: Builds every layer (linear, attention, normalization) directly on the Lorentz model via Lorentz transformations, removing the need for repeated tangent-space mappings and improving stability for deeper networks

- \(\kappa\)-GCN [Bachmann et al., 2020]: Operates in the stereographic model with learnable curvature, unifying hyperbolic (\(\kappa < 0\)), Euclidean (\(\kappa = 0\)), and spherical (\(\kappa > 0\)) geometries
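The way a single curvature parameter unifies the three geometries in the stereographic model can be illustrated with the distance from the origin. The sketch assumes the κ-stereographic formulas of Bachmann et al. (2020): $d_\kappa(\mathbf{0}, \mathbf{x}) = 2\tan_\kappa^{-1}(\lVert\mathbf{x}\rVert)$, where $\tan_\kappa^{-1}$ is $\operatorname{artanh}$, the identity, or $\arctan$ (suitably rescaled) depending on the sign of $\kappa$. The function names are hypothetical.

```python
import math

def tan_k_inv(u: float, kappa: float) -> float:
    """Curvature-dependent inverse tangent used by the kappa-stereographic model."""
    if kappa < 0:
        s = math.sqrt(-kappa)
        if s * u >= 1.0:
            raise ValueError("for kappa < 0, points must satisfy ||x|| < 1/sqrt(-kappa)")
        return math.atanh(s * u) / s
    if kappa > 0:
        s = math.sqrt(kappa)
        return math.atan(s * u) / s
    return u  # kappa = 0: Euclidean limit


def dist_from_origin(norm: float, kappa: float) -> float:
    """d_kappa(0, x) = 2 * tan_kappa^{-1}(||x||)."""
    return 2.0 * tan_k_inv(norm, kappa)


for kappa in (-1.0, -1e-8, 0.0, 1e-8, 1.0):
    print(f"kappa = {kappa:+.0e}: d(0, x) = {dist_from_origin(0.3, kappa):.6f}")
```

As $\kappa \to 0$ from either side, the distance approaches the Euclidean value $2\lVert\mathbf{x}\rVert$. This continuity is what makes it possible to treat $\kappa$ as a learnable parameter that can move between geometries during training.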

3.4 Hyperbolic Activation Functions

Standard activation functions (ReLU, tanh, sigmoid) operate in Euclidean space and cannot be directly applied to hyperbolic points. Hyperbolic activation functions are typically defined by: (1) projecting to tangent space via the logarithmic map, (2) applying the Euclidean activation, and (3) mapping back via the exponential map. More recent approaches define intrinsic activations that avoid this intermediate mapping.
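The three-step recipe (log map, Euclidean activation, exp map) is short to write down. The sketch below works in the Poincaré ball with $c = -\kappa > 0$ and uses the origin as the reference point. The helper names are hypothetical, and ReLU is only the default activation.

```python
import numpy as np

def expmap0(v, c):
    n = np.linalg.norm(v)
    return v if n == 0 else np.tanh(np.sqrt(c) * n) * v / (np.sqrt(c) * n)


def logmap0(y, c):
    n = np.linalg.norm(y)
    return y if n == 0 else np.arctanh(np.sqrt(c) * n) * y / (np.sqrt(c) * n)


def tangent_activation(x, c, act=lambda t: np.maximum(t, 0.0)):
    """(1) log map to the tangent space at the origin, (2) Euclidean activation, (3) exp map back."""
    return expmap0(act(logmap0(x, c)), c)


c = 1.0
x = np.array([0.3, -0.5, 0.2])
y = tangent_activation(x, c)          # "hyperbolic ReLU"
print(y)                              # negative coordinate zeroed, result still inside the ball
```

Other activations can be passed in the same way, for example `act=np.tanh`. Intrinsic activations avoid the round trip through the tangent space.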

3.5 Optimization in Hyperbolic Space

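Training hyperbolic networks requires Riemannian optimization, where gradients are computed in the tangent space and updates follow geodesics rather than straight lines. Riemannian SGD and Riemannian Adam have been developed for this purpose [Bonnabel, 2013; Bécigneul and Ganea, 2019]. The Riemannian SGD update rule is:

$$\mathbf{x}_{t+1} = \exp_{\mathbf{x}_t}\!\left(-\eta_t \, \mathrm{grad}_R \, \mathcal{L}(\mathbf{x}_t)\right),$$

where $\mathrm{exp}_{\mathbf{x}_t}$ is the exponential map at $\mathbf{x}_t$, $\eta_t$ is the learning rate, $\mathcal{L}$ is the training loss, and $\mathrm{grad}_R$ is the Riemannian gradient.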
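Below is a toy instance of this update in the unit Poincaré ball ($\kappa = -1$). Here the Riemannian gradient is the Euclidean gradient rescaled by $(1 - \lVert\mathbf{x}\rVert^2)^2/4$, the inverse of the squared conformal factor $2/(1-\lVert\mathbf{x}\rVert^2)$. The step is taken with the full exponential map $\exp_{\mathbf{x}}(\mathbf{v}) = \mathbf{x} \oplus \tanh(\lambda_{\mathbf{x}}\lVert\mathbf{v}\rVert/2)\,\mathbf{v}/\lVert\mathbf{v}\rVert$. The objective, learning rate, and helper names are illustrative assumptions. Practical optimizers often replace the exponential map with a cheaper retraction.

```python
import numpy as np

def mobius_add(x, y):
    """Mobius addition in the unit ball (c = 1)."""
    xy, x2, y2 = np.dot(x, y), np.dot(x, x), np.dot(y, y)
    return ((1 + 2 * xy + y2) * x + (1 - x2) * y) / (1 + 2 * xy + x2 * y2)


def expmap(x, v):
    """Exponential map at x in the unit Poincare ball."""
    v_norm = np.linalg.norm(v)
    if v_norm == 0:
        return x
    lam = 2.0 / (1.0 - np.dot(x, x))          # conformal factor lambda_x
    return mobius_add(x, np.tanh(lam * v_norm / 2.0) * v / v_norm)


def rsgd_step(x, euclid_grad, lr):
    """One Riemannian SGD step: rescale the gradient, then move along a geodesic."""
    riem_grad = (1.0 - np.dot(x, x)) ** 2 / 4.0 * euclid_grad
    return expmap(x, -lr * riem_grad)


target = np.array([0.5, 0.3])
x = np.zeros(2)
for _ in range(200):
    grad = 2.0 * (x - target)                  # Euclidean gradient of ||x - target||^2
    x = rsgd_step(x, grad, lr=0.5)
print(x)                                       # converges to the target, always inside the ball
```

The factor $(1 - \lVert\mathbf{x}\rVert^2)^2/4$ shrinks Euclidean-coordinate steps near the boundary. Without it, a fixed learning rate would produce very large hyperbolic moves there.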

4. Hyperbolic Transformers

Transformer architectures revolutionized natural language processing through their self-attention mechanisms and efficient parallel processing of sequential data. Hyperbolic transformers extend this paradigm to hyperbolic space, particularly benefiting applications involving deeply nested linguistic structures, hierarchical relations, and multi-scale features.

The key challenge in building hyperbolic transformers is that the self-attention mechanism relies heavily on linear algebra operations (queries, keys, values via matrix multiplication, softmax normalization) that assume Euclidean structure. Adapting each component to respect hyperbolic geometry requires careful mathematical reformulation.

4.1 Hyperbolic Attention Mechanisms

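The standard attention score $\mathrm{Attn}(\mathbf{Q}, \mathbf{K}) = \mathrm{softmax}(\mathbf{Q}\mathbf{K}^\top / \sqrt{d_k})$ can be reformulated using hyperbolic distance:

$$\alpha_{ij} = \frac{\exp\left(-\beta \, d_{\mathbb{H}}(\mathbf{q}_i, \mathbf{k}_j)\right)}{\sum_{j'} \exp\left(-\beta \, d_{\mathbb{H}}(\mathbf{q}_i, \mathbf{k}_{j'})\right)},$$

where $d_{\mathbb{H}}$ is the hyperbolic distance and $\beta > 0$ is a learnable temperature. Under this formulation, a query attends most strongly to keys that are close to it on the manifold. Hyperbolic distance depends on both depth (distance from the origin) and branch (angular separation), and angular differences are amplified far from the origin. As a result, attention tends to concentrate on tokens in the same region of the hierarchy, such as a node and its nearby ancestors or descendants, which lets the mechanism capture parent-child dependencies.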
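Below is a minimal sketch of distance-based attention in the Lorentz model ($\kappa = -1$). Attention weights are computed with the formula above. The values are then aggregated with a Lorentzian centroid: take the weighted sum in ambient coordinates and rescale it back onto the hyperboloid. That aggregation is one choice among several used in practice and an assumption here, as are all the names.

```python
import numpy as np

def minkowski_inner(x, y):
    """<x, y>_L over the last axis, broadcasting over leading axes."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)


def lift(u):
    """Place Euclidean vectors u (..., n) on the hyperboloid (..., n + 1)."""
    x0 = np.sqrt(1.0 + np.sum(u ** 2, axis=-1, keepdims=True))
    return np.concatenate([x0, u], axis=-1)


def lorentz_dist(x, y):
    return np.arccosh(np.maximum(-minkowski_inner(x, y), 1.0))


def hyperbolic_attention(q, k, v, beta=1.0):
    """q: (n_q, n+1); k, v: (n_k, n+1); all points on the hyperboloid."""
    d = lorentz_dist(q[:, None, :], k[None, :, :])     # pairwise distances, (n_q, n_k)
    logits = -beta * d
    logits -= logits.max(axis=1, keepdims=True)         # numerically stable softmax
    w = np.exp(logits)
    w /= w.sum(axis=1, keepdims=True)
    s = w @ v                                            # weighted sum in ambient space
    norm = np.sqrt(-minkowski_inner(s, s))              # s is time-like, so this is real
    return s / norm[:, None], w                          # Lorentzian centroid on the hyperboloid


rng = np.random.default_rng(0)
keys = lift(rng.normal(size=(5, 3)))
vals = lift(rng.normal(size=(5, 3)))
queries = keys[:2].copy()                                # each query coincides with one key
out, w = hyperbolic_attention(queries, keys, vals, beta=2.0)
print(w.round(3))                                        # each row peaks at its matching key
```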

Alternative formulations include:

- Lorentz attention: Using the Minkowski inner product \(\langle \mathbf{q}_i, \mathbf{k}_j \rangle_{\mathcal{L}}\) directly as an attention score

- Tangent-space attention: Computing standard dot-product attention in the tangent space, then projecting results back

- Hybrid attention: Combining Euclidean and hyperbolic attention heads within the same layer

4.2 Multi-Resolution Processing

One of the most compelling advantages of hyperbolic transformers is their potential to process information at different scales simultaneously. In the Poincaré disk, points near the center tend to capture high-level, coarse-grained information (analogous to root-level concepts), while points near the boundary encode fine-grained details (analogous to leaf-level specifics). This multi-resolution property can help hyperbolic transformers process hierarchical abstractions more efficiently than their Euclidean counterparts.

4.3 Hyperbolic Position Encodings

Position encodings adapted to hyperbolic geometry can better preserve hierarchical relationships between tokens. Instead of sinusoidal or learned Euclidean position vectors, hyperbolic position encodings place position information on the manifold, where the distance structure naturally encodes both sequential order and hierarchical depth. For example, in a document with sections, subsections, and paragraphs, hyperbolic position encodings can jointly capture both the linear reading order and the nesting structure.

4.4 Notable Hyperbolic Transformer Models

- HyboNet [Chen et al., 2022]: Builds attention, feed-forward, and normalization layers directly on the Lorentz model via Lorentz transformations, enabling fully hyperbolic computation without repeated tangent-space mappings

- Hypformer [Yang et al., 2024]: A fully hyperbolic Transformer in the Lorentz model that introduces foundational hyperbolic modules and a linear self-attention mechanism, enabling efficient processing of large-scale graphs and long sequences

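- Mixed-curvature product spaces [Gu et al., 2019]: Learn representations in product manifolds that combine hyperbolic, Euclidean, and spherical components, with curvatures learned per factor. This is an embedding framework rather than a Transformer, but it provides the product-manifold foundation for mixed-curvature Transformer designs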
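The Lorentz-model layers in HyboNet and Hypformer share a common pattern: apply a learned transformation, then recompute the time-like coordinate so that the output lies on the hyperboloid. The sketch below shows a simplified version of that pattern. It is not either paper's exact layer; the published layers add details such as learned norm scaling or curvature changes, which are omitted. The function names are hypothetical.

```python
import numpy as np

def minkowski_inner(x, y):
    """<x, y>_L over the last axis."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)


def lorentz_linear(x, W, b, kappa=-1.0):
    """Simplified Lorentz linear layer (illustrative, not a published formulation).

    x: (batch, n+1) points on H^n_kappa; W: (m, n+1); b: (m,).
    The space-like output is W x + b; the time-like coordinate is then recomputed
    so that <y, y>_L = 1/kappa, i.e. the output lies on H^m_kappa.
    """
    space = x @ W.T + b
    time = np.sqrt(np.sum(space ** 2, axis=-1, keepdims=True) - 1.0 / kappa)
    return np.concatenate([time, space], axis=-1)


rng = np.random.default_rng(0)
kappa = -1.0
u = rng.normal(size=(4, 3))
x = np.concatenate([np.sqrt(np.sum(u ** 2, axis=-1, keepdims=True) - 1.0 / kappa), u], axis=-1)
W = rng.normal(size=(5, 4)) * 0.5
b = np.zeros(5)
y = lorentz_linear(x, W, b, kappa)
print(y.shape, np.allclose(minkowski_inner(y, y), 1.0 / kappa))   # (4, 6) True
```

Because the input's time coordinate also feeds into `W`, the layer can mix information across all coordinates. The output is still guaranteed to land on the manifold without any exponential or logarithmic map.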

5. Hyperbolic Foundation Models

Foundation models, namely large-scale systems trained on broad data and then fine-tuned for specific applications, represent the cutting edge of modern AI. Hyperbolic foundation models incorporate hyperbolic geometry into their architecture to better capture the hierarchical structures inherent in language, knowledge, and multimodal data. This forms one of the most active and promising frontiers in geometric deep learning.

5.1 Hyperbolic Large Language Models

Recent work has demonstrated that incorporating hyperbolic geometry into LLMs yields measurable improvements:

- HypLoRA [Yang et al., 2025]: Introduces hyperbolic low-rank adaptation for fine-tuning LLMs. By performing LoRA updates in hyperbolic space, HypLoRA better captures the hierarchical nature of downstream tasks while maintaining the parameter efficiency of standard LoRA. Experiments show consistent gains on commonsense reasoning, natural language understanding, and mathematical reasoning benchmarks.

- HELM [He et al., 2025]: Hyperbolic LLMs via Mixture-of-Curvature Experts. Proposes a mixture-of-experts architecture in which different experts operate in spaces of different curvature, allowing the model to adapt the geometry applied to each input.

- Hyperbolic Token Embeddings: Replacing standard Euclidean token embeddings with hyperbolic embeddings can improve the representation of taxonomic and compositional relationships, especially benefiting tasks involving structured knowledge.

5.2 Hyperbolic Vision Foundation Models

Visual data exhibits hierarchical structure at multiple levels, ranging from pixels to textures, parts, objects, and scenes. Hyperbolic vision models exploit this:

- Hyperbolic Image Embeddings [Khrulkov et al., 2020]: Mapping image features to hyperbolic space for improved zero-shot and few-shot classification, especially when label hierarchies are available (e.g., ImageNet’s WordNet-based class taxonomy)

- MERU (Hyperbolic CLIP) [Desai et al., 2023]: Adapting CLIP-style contrastive learning to hyperbolic space, enabling better cross-modal alignment of hierarchical visual and linguistic concepts

- Hyperbolic ViT [Ermolov et al., 2022]: Projects the output embeddings of a Vision Transformer into the Poincaré ball and trains them with a hyperbolic pairwise cross-entropy loss for metric learning

5.3 Hyperbolic Multi-Modal Models

Multi-modal foundation models (e.g., combining vision, language, and audio) benefit from hyperbolic geometry through:

- Cross-Modal Hierarchical Alignment: Different modalities often share hierarchical structure (e.g., a visual scene graph and a textual description both encode part-whole relationships). Hyperbolic space provides a natural shared geometry for aligning these hierarchies.

- Compositional Grounding: Hyperbolic representations can better capture the compositional nature of language and visual scenes, where meanings compose hierarchically.

- Efficient Multi-Modal Fusion: The parameter efficiency of hyperbolic representations can reduce the overhead of multi-modal fusion layers.

5.4 Key Advantages

• Improved Knowledge Representation: Hyperbolic foundation models can more efficiently encode taxonomic knowledge, ontological relationships, and compositional semantics, potentially reducing parameter counts while improving performance on tasks requiring hierarchical understanding.

• Enhanced Reasoning Capabilities: The natural tree-like structure of hyperbolic space aligns with logical hierarchies and deductive reasoning patterns, potentially enabling more sophisticated chain-of-thought and structured reasoning abilities.

• More Efficient Scaling: The intrinsic capacity of hyperbolic space to represent exponentially more information within the same dimensionality suggests better scaling properties as model sizes increase. This is a critical factor given the computational cost of modern foundation models.

• Cross-Modal Hierarchical Alignment: For multimodal foundation models, hyperbolic representations may better align hierarchical structures across different modalities (e.g., visual scene graphs with linguistic parse trees), improving performance on complex cross-modal tasks.

6. Challenges and Opportunities

• Numerical Stability: Hyperbolic operations involve exponential and hyperbolic trigonometric functions that can overflow or underflow. The problem is worst near the boundary of the Poincaré disk, where the conformal factor $\lambda_{\mathbf{x}} = \frac{2}{1-\lVert \mathbf{x}\rVert^2}$ (for $\kappa = -1$) diverges. Techniques such as clipping norms, projecting points back inside the ball, working in the Lorentz model, and carrying out sensitive operations in higher precision (e.g., float64) have been developed to mitigate these issues, but a general solution remains elusive.
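Here is a sketch of two common safeguards, norm projection and clamped inverse hyperbolic functions. It includes a demonstration of the failure they guard against: in float32, a point at Euclidean norm $1 - 10^{-8}$ rounds to exactly 1, so the conformal factor becomes infinite. The `eps` values and function names are illustrative assumptions; libraries choose their own thresholds.

```python
import numpy as np

def project_to_ball(x, c=1.0, eps=1e-5):
    """Rescale points whose norm exceeds (1 - eps)/sqrt(c) back inside the ball."""
    max_norm = (1.0 - eps) / np.sqrt(c)
    norm = np.linalg.norm(x, axis=-1, keepdims=True)
    scale = np.where(norm > max_norm, max_norm / np.maximum(norm, 1e-15), 1.0)
    return x * scale


def safe_artanh(z, eps=1e-7):
    """artanh with its argument clamped away from +/-1 to avoid infinities."""
    return np.arctanh(np.clip(z, -1 + eps, 1 - eps))


def conformal_factor(x, c=1.0):
    """lambda_x = 2 / (1 - c ||x||^2)."""
    return 2.0 / (1.0 - c * np.sum(x * x, axis=-1))


with np.errstate(divide="ignore"):
    x32 = np.array([1 - 1e-8], dtype=np.float32)   # rounds to exactly 1.0 in float32
    print(conformal_factor(x32))                   # inf

x_ok = project_to_ball(np.array([0.8, 0.8]))       # norm ~1.13, pulled back inside
print(np.linalg.norm(x_ok), conformal_factor(x_ok))
print(safe_artanh(1.0))                            # finite instead of inf
```

Projection keeps the conformal factor finite but large (about $10^5$ here). This is one reason projection alone does not fully solve the stability problem.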

• Optimization Difficulties: Riemannian optimization (RSGD, RAdam) in hyperbolic space is more complex than standard gradient descent. The curvature of the space introduces additional terms in the update rules, and learning rate sensitivity is amplified, since points near the boundary of the Poincaré disk experience much larger effective step sizes. Developing efficient, stable, and scalable Riemannian optimizers is an active research area.

• Scalability to Large Models: While hyperbolic methods have shown success at moderate scales, scaling to billions of parameters introduces engineering challenges. GPU-efficient implementations of hyperbolic operations, memory-efficient training strategies, and distributed Riemannian optimization are needed to bring hyperbolic methods to production-scale foundation models.

• Integration with Existing Architectures: Seamlessly combining hyperbolic components with traditional Euclidean deep learning modules presents both theoretical and implementation challenges. Hybrid approaches that selectively apply hyperbolic geometry to specific components (e.g., embedding layers or attention modules) while keeping other components Euclidean have shown promise as a practical middle ground.

• Theoretical Understanding: The theoretical foundations of hyperbolic deep learning are still developing. Key open questions include: generalization bounds for hyperbolic networks, the expressiveness gap between hyperbolic and Euclidean representations, optimal curvature selection strategies, and the approximation theory of functions on hyperbolic manifolds.

• Learnable Curvature: The curvature parameter $\kappa$ significantly affects representation quality, but it is often treated as a hyperparameter. Learning curvature jointly with model parameters, or using product manifolds with mixed curvatures, remains a promising but challenging direction.

• Extreme Efficiency in Hierarchical Tasks: For applications dominated by tree-like structures (taxonomies, ontologies, organizational charts), hyperbolic approaches could dramatically reduce model size while improving performance, achieving “more with less.”

• Novel Architectural Paradigms: Fully embracing non-Euclidean geometry may inspire fundamentally new neural network architectures beyond current paradigms, analogous to how the Transformer architecture revolutionized sequence modeling.

• Cross-Disciplinary Insights: The integration of differential geometry, algebraic topology, and Riemannian optimization with deep learning opens possibilities for importing powerful techniques from mathematics and theoretical physics into machine learning.

• Biologically Inspired Representations: Growing evidence suggests that biological neural systems may utilize hyperbolic-like representations for spatial navigation and conceptual organization. This connection offers insights for neuromorphic computing and cognitive architectures.

• AI for Science: Hyperbolic representations are particularly well-suited for scientific data with inherent hierarchical structure, including molecular graphs, protein folding hierarchies, phylogenetic trees, and multi-scale physical simulations.

7. Conclusion

Hyperbolic geometry provides a powerful mathematical framework for enhancing deep learning systems, particularly for applications involving hierarchical, tree-like structures that are ubiquitous in natural language, knowledge representation, computer vision, and scientific data. The intersection of hyperbolic geometry and artificial intelligence represents a frontier where mathematical elegance meets practical utility, potentially addressing fundamental limitations of traditional Euclidean approaches.

As research progresses, we can expect hyperbolic methods to become increasingly integrated into mainstream deep learning systems, particularly for knowledge-intensive applications requiring multi-scale reasoning. The development of stable, efficient hyperbolic operations and their thoughtful integration into neural architectures promises significant advances in AI’s ability to represent and reason about complex hierarchical structures.

Key directions to watch include: (1) scaling hyperbolic foundation models to billions of parameters, (2) mixed-curvature and product manifold approaches that adaptively combine different geometries, (3) hyperbolic methods for reasoning and planning in LLM agents, and (4) applications in AI for science where hierarchical structure is a first-class concern.

We invite the community to join us in advancing this exciting research direction. Please refer to our events page for upcoming workshops and tutorials, and our collection page for a comprehensive literature reference.

Contributors

Menglin Yang, Neil He, Hiren Madhu, Ngoc Bui, Ali Maatouk, Rishabh Anand, Yifei Zhang, Jialin Chen, Jiahong Liu, Bo Xiong, Min Zhou, Irwin King, Melanie Weber, Rex Ying

Invited Speakers: Philip S. Yu, Shirui Pan, Min Zhou, Pascal Mettes, Smita Krishnaswamy

References

- Adcock, A. B., Sullivan, B. D., & Mahoney, M. W. (2013). Tree-like structure in large social and information networks. ICDM.

- Bachmann, G., Bécigneul, G., & Ganea, O. (2020). Constant Curvature Graph Convolutional Networks. ICML. arXiv:1911.05076

- Bécigneul, G., & Ganea, O. (2019). Riemannian Adaptive Optimization Methods. ICLR. arXiv:1810.00760

- Bonnabel, S. (2013). Stochastic Gradient Descent on Riemannian Manifolds. IEEE Transactions on Automatic Control, 58(9).

- Chami, I., Ying, R., Ré, C., & Leskovec, J. (2019). Hyperbolic Graph Convolutional Neural Networks. NeurIPS. arXiv:1910.12933

- Chen, W., Han, X., Lin, Y., Zhao, H., Liu, Z., Li, P., Sun, M., & Zhou, J. (2022). Fully Hyperbolic Neural Networks. ACL. arXiv:2105.14686

- Desai, K., Nickel, M., Rajpurohit, T., Johnson, J., & Vedantam, R. (2023). Hyperbolic Image-Text Representations (MERU). ICML. arXiv:2304.09172

- Ermolov, A., Mirvakhabova, L., Khrulkov, V., Sebe, N., & Oseledets, I. (2022). Hyperbolic Vision Transformers: Combining Improvements in Metric Learning. CVPR. arXiv:2203.10833

- Ganea, O., Bécigneul, G., & Hofmann, T. (2018). Hyperbolic Neural Networks. NeurIPS. arXiv:1805.09112

- Gromov, M. (1987). Hyperbolic Groups. In Essays in Group Theory, MSRI Publ., Springer.

- Gu, A., Sala, F., Gunel, B., & Ré, C. (2019). Learning Mixed-Curvature Representations in Product Spaces. ICLR. OpenReview

- He, N., Yang, M., et al. (2025). HELM: Hyperbolic Large Language Models via Mixture-of-Curvature Experts. NeurIPS. arXiv:2505.24722

- Khrulkov, V., Mirvakhabova, L., Ustinova, E., Oseledets, I., & Lempitsky, V. (2020). Hyperbolic Image Embeddings. CVPR. arXiv:1904.02239

- Liu, Q., Nickel, M., & Kiela, D. (2019). Hyperbolic Graph Neural Networks. NeurIPS. arXiv:1910.12892

- Nickel, M., & Kiela, D. (2017). Poincaré Embeddings for Learning Hierarchical Representations. NeurIPS. arXiv:1705.08039

- Nickel, M., & Kiela, D. (2018). Learning Continuous Hierarchies in the Lorentz Model of Hyperbolic Geometry. ICML. arXiv:1806.03417

- Sarkar, R. (2011). Low Distortion Delaunay Embedding of Trees in Hyperbolic Plane. Graph Drawing.

- Shimizu, R., Mukuta, Y., & Harada, T. (2021). Hyperbolic Neural Networks++. ICLR. arXiv:2006.08210

- Ungar, A. A. (2008). Analytic Hyperbolic Geometry and Albert Einstein's Special Theory of Relativity. World Scientific.

- Yang, M., Verma, H., Zhang, D. C., Liu, J., King, I., & Ying, R. (2024). Hypformer: Exploring Efficient Transformer Fully in Hyperbolic Space. KDD. arXiv:2407.01290

- Yang, M. et al. (2025). Hyperbolic Fine-tuning for Large Language Models (HypLoRA). NeurIPS.

Citation

If you find this webpage useful, please consider citing our work:

@article{yang2026hyperbolic,
  title = {Hyperbolic Geometry and Non-Euclidean Representations for Large Language Models},
  author = {Yang, Menglin and He, Neil and Madhu, Hiren and
            Bui, Ngoc and Maatouk, Ali and Anand, Rishabh and
            Zhang, Yifei and Chen, Jialin and Liu, Jiahong and
            Xiong, Bo and Zhou, Min and King, Irwin and
            Weber, Melanie and Ying, Rex},
  year = {2026},
  url = {https://hyperboliclearning.github.io}
}